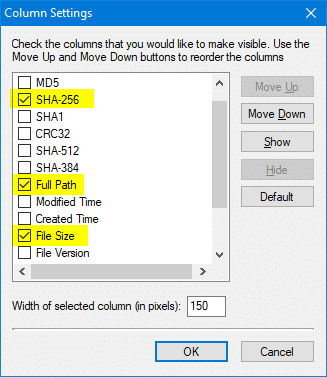

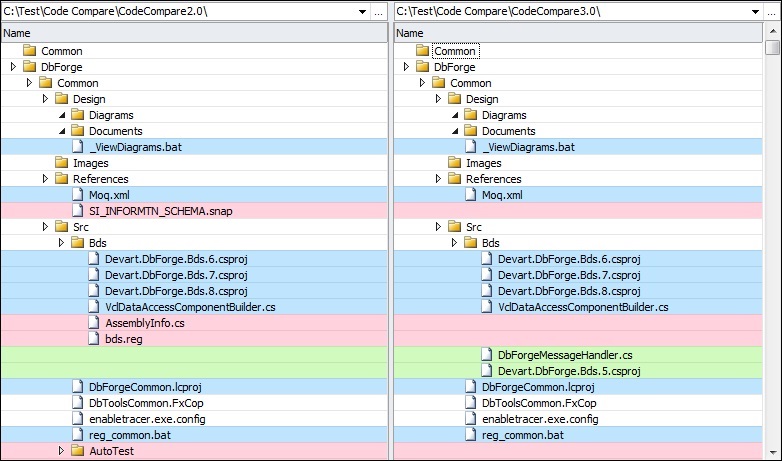

It would make a lot more sense to replace that one tool with one that understands folders than to build a Rube Goldberg machine to auto-sync folders into a flat list. It has many enticing options, but they are useless because it won't access any of your files or folders. I mean, it doesn't even show any files, and there are hundreds in each folder. With this and your other thread (which are both really the same question, I think?), it seems like you are layering on more and more tools and complexity in an attempt to work around a fairly ridiculous limitation of the "database" tool you are using.

Beyond Compare can be used to compare both files and folders. It will bring up a new tab showing the differences in common files, a list of the files that are only in the first folder, and a list of the files that are only in the second folder. Right-click and choose the new extension menu item. Syncing the copies is the same job as syncing the originals, and that's the part that's problematic. You open up a folder/project in Visual Studio Code, then select the two folders to compare.

There is an FC command, but when run in PowerShell I think it... What is the best way to do a byte-by-byte comparison of two large files on Windows 10 64-bit? Is there a way to do a hash compare of both folders, and then for it to tell...

What would adding a TeraCopy copy-and-verify to an intermediate folder get you, other than more complexity? You'd just be duplicating your source folders and then syncing the duplicate to your destination, which doesn't seem to get you anywhere. The problem is that in the second folder, the files are named very differently.

First, there are two ways to do the content comparison. There's no underlying "basic compare command"; preserving the folder structure is just the way people want syncing to work in almost all cases, and it is the only way syncing can work in the general case (where two files may have the same name in different directories and thus cannot be resolved to a single flat destination).
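For the hash-compare and byte-by-byte questions above, here is a minimal Python sketch, not a tool anyone in the thread actually used. It matches files by content hash rather than by name, which is one way to cope with the second folder using very different file names. The function names (`file_digest`, `index_by_content`, `compare_folders`, `same_bytes`), the choice of SHA-256, and the 1 MiB chunk size are all assumptions made for illustration.

```python
import hashlib
import os
from pathlib import Path

CHUNK = 1024 * 1024  # read 1 MiB at a time so large files never sit fully in memory


def file_digest(path, algo="sha256"):
    """Return the hex digest of a file, read in chunks."""
    h = hashlib.new(algo)
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(CHUNK), b""):
            h.update(block)
    return h.hexdigest()


def index_by_content(folder):
    """Map content hash -> list of relative paths under folder (recursive)."""
    root = Path(folder)
    index = {}
    for p in sorted(root.rglob("*")):
        if p.is_file():
            rel = p.relative_to(root).as_posix()
            index.setdefault(file_digest(p), []).append(rel)
    return index


def compare_folders(first, second):
    """Report files present in both folders (by content) and in only one."""
    ia, ib = index_by_content(first), index_by_content(second)
    return {
        # same bytes on both sides, whatever the files are called
        "in_both": {d: (ia[d], ib[d]) for d in ia.keys() & ib.keys()},
        "only_in_first": sorted(n for d in ia.keys() - ib.keys() for n in ia[d]),
        "only_in_second": sorted(n for d in ib.keys() - ia.keys() for n in ib[d]),
    }


def same_bytes(a, b):
    """Direct byte-by-byte comparison of two files, with no hashing involved."""
    if os.path.getsize(a) != os.path.getsize(b):
        return False  # different sizes can never be identical
    with open(a, "rb") as fa, open(b, "rb") as fb:
        while True:
            block_a, block_b = fa.read(CHUNK), fb.read(CHUNK)
            if block_a != block_b:
                return False
            if not block_a:  # both files reached end of file together
                return True
```

Calling `compare_folders(r"C:\source", r"D:\backup")` (hypothetical paths) returns a dictionary with the three lists. Hashing suits the folder case because each file is read once and then matched against everything else; `same_bytes` suits a single pair of large files because it can stop at the first differing chunk.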
